For those of you who checked Google Search Console (formerly known as Webmaster Tools) on Tuesday, July 28, you might have been surprised to see a new warning: “Googlebot cannot access CSS and JS files on {your domain}.” For sites that rely on JavaScript and CSS, this may have been alarming.

In this post I will try to answer some of the most common questions our clients have asked about this warning, and explain what you should do when you see it.

What does this warning mean?

It means that Googlebot cannot fully crawl your site, because restrictions in your robots.txt file are blocking it from your JavaScript and CSS files. This is a warning, not a penalty, but Google is saying that it could result in a drop in your rankings.

Where do I find the warning?

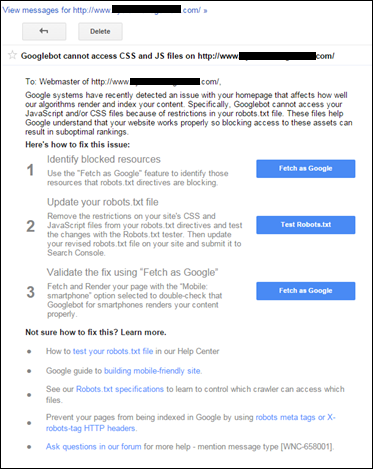

You may have received an email from Google Search Console. Otherwise, you can search under find the warning under the messages link, as shown below.

What percentage of users received this warning?

About 5% of our customers received this warning. If that rate holds across the web, hundreds of thousands of webmasters may have received this message.

Should you be concerned?

This is a warning, not a penalty, but any warning that suggests you may receive ‘suboptimal’ rankings should be taken seriously.

What do you do to correct this error?

Luckily, Google has provided a step-by-step guide (pictured on the right) for correcting these Googlebot crawl errors.

In short, editing your robots.txt file so that it no longer blocks Googlebot from your JavaScript and CSS files will fix the issue and make the warning in Google Search Console disappear.
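
If your robots.txt blocks whole directories (a WordPress plugins folder, for example), one way to make that edit is to add Allow rules for the stylesheets and scripts inside them. The sketch below is a hypothetical helper (`add_css_js_allows` is our own name, not a Google tool). It assumes Google's documented longest-match behavior, where the rule with the longest matching path wins, so an Allow path that extends the Disallow path takes precedence for CSS and JS URLs.

```python
def add_css_js_allows(robots_txt: str) -> str:
    """Return a copy of robots_txt with Allow rules for .css and .js files
    added after every directory-level Disallow (a path ending in "/")."""
    out = []
    for line in robots_txt.splitlines():
        out.append(line)
        # Split "Directive: value", ignoring any trailing "# comment".
        directive, _, value = line.split("#", 1)[0].partition(":")
        path = value.strip()
        if directive.strip().lower() == "disallow" and path.endswith("/"):
            # A longer Allow path outranks the shorter Disallow path under
            # Google's longest-match rule, so these win for CSS/JS URLs.
            for ext in ("css", "js"):
                out.append(f"Allow: {path}*.{ext}")
    return "\n".join(out) + "\n"


if __name__ == "__main__":
    original = "User-agent: *\nDisallow: /wp-admin/\nDisallow: /wp-content/plugins/\n"
    print(add_css_js_allows(original))
```

Review the output before uploading it: a pattern such as `*.js` also matches `.json` files, and you may prefer to add the rules only under the directories that actually hold front-end assets.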

How can you check whether you have resolved the issue?

Once you have made the changes to robots.txt, you can verify that Googlebot can crawl your site by checking here.

If Googlebot is still being blocked, go back to robots.txt and make further changes until the issue is resolved.
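
If you want to sanity-check a rule change locally before testing it in Search Console, the sketch below approximates how Googlebot decides whether a path is allowed: it picks the group for its user agent (falling back to `User-agent: *`), supports the `*` and `$` wildcards, and applies the longest matching rule, with Allow winning a tie. It is a simplified, hypothetical helper written for this post, not Google's own parser. Python's built-in `urllib.robotparser` isn't a substitute here, because it treats rule paths as plain prefixes and doesn't understand these wildcards.

```python
import re


def _rule_regex(path: str):
    """Translate a robots.txt path pattern into a regex: '*' matches any
    run of characters and a trailing '$' anchors the end of the URL."""
    anchored = path.endswith("$")
    if anchored:
        path = path[:-1]
    body = ".*".join(re.escape(part) for part in path.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def googlebot_allowed(robots_txt: str, url_path: str, agent: str = "googlebot") -> bool:
    """Simplified check of whether `agent` may fetch `url_path`."""
    groups = {}  # user-agent token -> list of (is_allow, path) rules
    current_agents = []
    in_rules = False
    for raw in robots_txt.splitlines():
        key, _, value = raw.split("#", 1)[0].partition(":")
        key, value = key.strip().lower(), value.strip()
        if key == "user-agent":
            if in_rules:  # a User-agent line after rules starts a new group
                current_agents, in_rules = [], False
            token = value.lower()
            current_agents.append(token)
            groups.setdefault(token, [])
        elif key in ("allow", "disallow") and current_agents:
            in_rules = True
            if value:  # an empty "Disallow:" blocks nothing
                for token in current_agents:
                    groups[token].append((key == "allow", value))
    # Simplification: exact user-agent token match, else the "*" group.
    rules = groups.get(agent.lower(), groups.get("*", []))
    best_len, allowed = -1, True  # no matching rule means allowed
    for is_allow, path in rules:
        if _rule_regex(path).match(url_path):
            # Longest matching path wins; Allow wins a tie.
            if len(path) > best_len or (len(path) == best_len and is_allow):
                best_len, allowed = len(path), is_allow
    return allowed
```

Trying it on a typical WordPress rule shows why rule length matters more than rule order: adding a short `Allow: /*.css` does not unblock `/wp-content/plugins/form/style.css`, because the longer `Disallow: /wp-content/plugins/` still wins, whereas `Allow: /wp-content/plugins/*.css` does. Always confirm the final result with Google's own tools.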

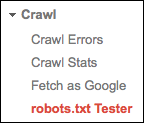

I would also recommend that you use the robots.txt testing tool (pictured below) in Google Search Console to determine if you are experiencing any other issues.

In a Search Engine Journal article, Matt Southern recommends removing any Disallow lines like these:

- Disallow: /.js$*

- Disallow: /.inc$*

- Disallow: /.css$*

- Disallow: /.php$*
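
If your robots.txt contains rules like these, a small script can strip them out before you upload a new version. This is a hypothetical sketch (`strip_resource_disallows` is our own name), assuming the rules you want gone are wildcard patterns that mention one of the four extensions listed above. Everything else, including comments, blank lines and Disallow rules for individual pages, is kept.

```python
# Extensions from the list above; treat this tuple as an assumption to adjust.
RESOURCE_EXTENSIONS = (".js", ".css", ".inc", ".php")


def strip_resource_disallows(robots_txt: str) -> str:
    """Drop wildcard Disallow rules that target the extensions above."""
    kept = []
    for line in robots_txt.splitlines():
        directive, _, value = line.split("#", 1)[0].partition(":")
        is_disallow = directive.strip().lower() == "disallow"
        # Only pattern rules (containing * or $) are removed; a Disallow for
        # a single page such as /wp-login.php is left alone.
        is_pattern = "*" in value or "$" in value
        if is_disallow and is_pattern and any(ext in value for ext in RESOURCE_EXTENSIONS):
            continue
        kept.append(line)
    return "\n".join(kept) + "\n"
```

Limiting the removal to pattern rules is a design choice: if a specific PHP page really should stay out of Google's index, its explicit Disallow line survives.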

Where do I get more information on what is blocking Googlebot?

You can also go to the “Blocked Resources” section in Google Search Console. Clicking on each entry there gives you a better idea of the issues on each blocked page.
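
The report does this lookup for you, but if you want to see which stylesheets and scripts a particular page pulls in, a short script can list them from the page's HTML so you can test each one against robots.txt. This is a hypothetical sketch using Python's standard `html.parser`; it only finds `<script src>` and `<link rel="stylesheet">` tags, so resources loaded by other scripts or through CSS `@import` won't show up.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class ResourceCollector(HTMLParser):
    """Collect absolute URLs of external scripts and stylesheets."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.resources = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "script" and attrs.get("src"):
            self.resources.append(urljoin(self.base_url, attrs["src"]))
        elif tag == "link" and attrs.get("href"):
            rel = (attrs.get("rel") or "").lower().split()
            if "stylesheet" in rel:
                self.resources.append(urljoin(self.base_url, attrs["href"]))


def css_js_resources(html: str, base_url: str) -> list:
    """Return the CSS and JS URLs referenced by `html`, resolved against base_url."""
    collector = ResourceCollector(base_url)
    collector.feed(html)
    collector.close()
    return collector.resources
```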

Are all the warnings real?

We have definitely seen some false positives with this warning. These include sections of a website that Googlebot should not crawl, and third-party resources that are beyond the webmaster's control.
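
Because robots.txt rules only apply to the host that serves them, blocked files on someone else's domain can't be fixed from your own robots.txt. A minimal sketch for separating the two groups, assuming you have a list of absolute resource URLs (for example, copied from the Blocked Resources report) and that any subdomain of your domain counts as yours; `split_by_owner` is a hypothetical name:

```python
from urllib.parse import urlsplit


def split_by_owner(resource_urls, site_host):
    """Split absolute URLs into (own, third_party) lists by host name."""
    own, third_party = [], []
    site_host = site_host.lower()
    for url in resource_urls:
        host = (urlsplit(url).hostname or "").lower()
        # Treat the site's own host and any of its subdomains as "ours".
        if host == site_host or host.endswith("." + site_host):
            own.append(url)
        else:
            third_party.append(url)
    return own, third_party
```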

Many of our WordPress clients are getting this warning because their robots.txt files exclude plugin directories and the admin area. These Disallow statements are clearly correct, and we hope Google will refine this warning so that it doesn't confuse webmasters.

Is this something new?

No. Google has been telling webmasters not to block CSS and JavaScript for years and years. Below we’ve included a video of Matt Cutts talking about the subject back in 2012.

Google also mentions this restriction in its webmaster guidelines, and it has updated its technical guidelines to reflect the change.

What can I do to stop this from happening?

If you don’t want to have to worry about technical glitches like these in the future, then you should consider a full-service organic search campaign. We fixed this problem for many of our clients before they were even aware it was happening, and we can do the same for you.
